The Developer’s Blueprint for Autonomous Data Pipelines: Solving the "Data Debt" Crisis

The Developer’s Blueprint for Autonomous Data Pipelines: Solving the "Data Debt" Crisis

Eliminate "Data Debt" by transforming unstructured PDFs and images into production-ready JSON. Learn how developers use intelligent APIs and automated pipelines to replace fragile manual entry with resilient, autonomous data streams.

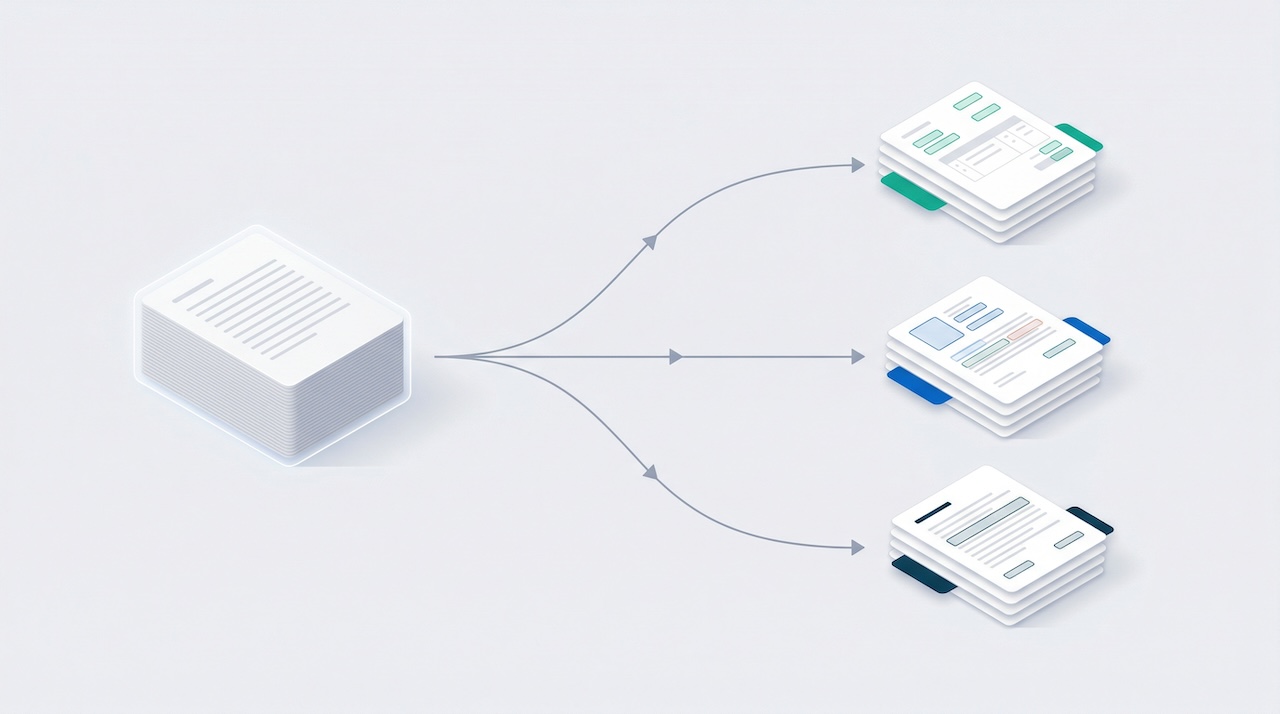

In the modern engineering stack, "Data Debt" is the silent killer of velocity. While your backend systems are built for structured efficiency, the reality of business input remains stubbornly unstructured, trapped in blurry JPGs, multi-page PDFs, and inconsistent ID documents. For tech teams, the manual transcription of this "dark data" isn't just an administrative chore; it is a structural bottleneck that prevents the deployment of truly autonomous systems. To bridge this gap, engineering leads are increasingly integrating intelligent data extraction software to transform visual noise into production-ready, structured JSON.

By treating document processing as an architectural tier rather than a peripheral task, developers can move away from fragile regex patterns and toward resilient, AI-driven data pipelines.

1. The Architectural Shift: Moving Beyond Basic OCR

Traditional Optical Character Recognition (OCR) is essentially a digital photocopier, it recognizes shapes but lacks logic. For a tech team building a scalable data entry automation enterprise solution, basic OCR provides the "what" (text) but fails to provide the "where" and "why" (context).

Comparison: Raw Text vs. Intelligent Extraction

```html

OCR vs. Intelligent Data Extraction

| Capability |

Legacy OCR (Tesseract / Basic Libs) |

Intelligent Data Extraction Software |

| Data Output |

Unstructured String Dump |

Validated JSON / Key-Value Pairs |

| Logic Layer |

Pixel-based recognition |

Semantic LLM-based understanding |

| Layout Resilience |

High failure rate on shifting grids |

Layout-agnostic entity identification |

| Integration |

Heavy local pre-processing required |

RESTful automated data capture API |

| Speed to Production |

Weeks of fine-tuning / training |

Immediate "Plug-and-Play" |

```

Modern intelligent data extraction software utilizes computer vision and Large Language Models (LLMs) to handle "Visual Density." This allows systems to extract nested data from complex tables and recognize "Total Amount" or "Expiry Date" even when they appear in entirely different coordinates across a thousand different documents.

2. The Engineering Choice: Python Libraries vs. Enterprise APIs

When building an extraction pipeline, developers often start with a "Homebuilt" mentality. While Python offers powerful open-source tools, the threshold for moving to a managed data parsing api is often lower than anticipated.

The Developer’s Stack Checklist

- Prototyping (Python Libraries):

- Tesseract: Best for high-contrast, simple text where privacy outweighs the need for high accuracy.

- EasyOCR / PaddleOCR: Excellent for multi-language support (80+ languages) and fast deployment on localized machines.

- The Trade-off: Requires significant dev-hours for image normalization, deskewing, and custom parsing logic.

- Production Scaling (Automated Data Capture API):

- Pre-trained Models: Skip the training phase for common documents like passports, receipts, and invoices.

- Stateless Processing: Ensures security by delivering data without storing sensitive information on the extraction server.

- Structured Delivery: Get a clean JSON payload that can be injected directly into a database or a trigger-based workflow.

For teams managing financial documents, utilizing a specialized invoice ocr api or a bank statement converter allows engineers to focus on core product features rather than the nuances of document variance.

3. High-Velocity Workflows: Building a Frictionless KYC Pipeline

In the compliance space, the biggest technical challenge is "Latency." A 3-day manual verification process for a passport or utility bill is an onboarding disaster. By implementing intelligent data extraction software into your KYC (Know Your Customer) stack, you shift from "Audit Mode" to "Real-time Verification."

The 4-Step Autonomous Identity Pipeline

- Capture & Categorize: Use a document splitter to automatically distinguish between a driver's license, a passport, and a proof of address in a single user upload.

- Entity Extraction: The automated data capture API identifies the Name, ID Number, and Date of Birth in milliseconds.

- Heuristic Validation: The system cross-references the extracted text against user-provided profile data.

- Instant Approval: If the confidence score exceeds the threshold, the user is onboarded immediately; otherwise, it is flagged for a "Human-in-the-Loop" review.

4. Financial Integrity: Scaling the Autonomous Back Office

The goal of the modern tech team is to make the finance department "invisible." This requires a robust ocr invoice api integration that can handle the volume of global scaling without increasing the burden on the accounting team.

- 3-Way Matching: Automatically match the extracted invoice data with Purchase Orders and receiving logs.

- Zero-Entry Reconciliation: Transform stagnant bank PDFs into searchable data, allowing for automated reconciliation against the company ledger.

- Error Mitigation: AI-driven extraction flags duplicate invoices or fraudulent tax IDs before they enter the payment queue.

By utilizing OCR accounting automated workflows, you remove the block from the revenue cycle, allowing the business to scale transaction volume without a proportional increase in administrative headcount.

Conclusion: The Architecture of a "Zero-Click" Future

The transition to intelligent data extraction software is a strategic decision to eliminate technical debt. When you stop treating documents as images and start treating them as data streams, you unlock a new level of organizational velocity.

Whether you are optimizing a KYC pipeline or building a global data entry automation enterprise solution, the mission remains the same: stop "typing" and start "trusting" your automated data layers. By choosing the right automated data capture API today, you ensure that your platform's growth is never limited by the speed of manual entry.